Hi,

I have several questions about how to reuse some of the baseline algorithms provided by OpenAI.

In the tutorials, I can see examples using SARSA and Q-learning.

If we would like to use PPO, DDPG, or TD3 instead, what are the steps to follow?

The showcase examples implemented for native Gym use CNNs (which extract features from pixels) as function approximators.

With ROS and the openai_ros package, how should we proceed? Are there plans to provide examples of how to use the more advanced RL algorithms that rely on ANNs, such as DQN/DPG?

I saw this project

but I think they don’t use the openai_ros package.

Regards,

Sugreev

Have a look at the course on Deep Learning with Domain.

There you train with more complex algorithms ;). You also learn some nice skills that are useful for training in simulation.
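As a rough illustration of the "steps to follow" part of the question: the training loop only talks to the environment through the Gym-style `reset()` and `step()` calls, so moving from Q-learning to a neural-network algorithm mostly means replacing the agent, not the environment. The sketch below assumes the classic Gym convention where `step()` returns `(observation, reward, done, info)`. `ToyCorridorEnv`, `TabularQAgent`, and `train` are hypothetical names invented for this example; they are not part of openai_ros or OpenAI Baselines, and the toy corridor merely stands in for a robot task so the snippet runs without ROS or Gazebo.

```python
import random


class ToyCorridorEnv:
    """Hypothetical stand-in for a robot task environment (not openai_ros code).

    The agent starts in cell 0 of a short corridor and must reach the last cell.
    """

    def __init__(self, length=5, max_steps=20):
        self.length = length
        self.max_steps = max_steps
        self.n_actions = 2  # 0 = move left, 1 = move right
        self.pos = 0
        self.steps = 0

    def reset(self):
        self.pos = 0
        self.steps = 0
        return self.pos

    def step(self, action):
        self.steps += 1
        move = 1 if action == 1 else -1
        # Clamp the position so the agent cannot leave the corridor.
        self.pos = min(max(self.pos + move, 0), self.length - 1)
        at_goal = self.pos == self.length - 1
        done = at_goal or self.steps >= self.max_steps
        reward = 1.0 if at_goal else -0.01
        return self.pos, reward, done, {}


class TabularQAgent:
    """Epsilon-greedy tabular Q-learning; any agent with act/learn fits the loop."""

    def __init__(self, n_actions, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
        self.n_actions = n_actions
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.rng = random.Random(seed)
        self.q = {}  # observation -> list of action values

    def _values(self, obs):
        return self.q.setdefault(obs, [0.0] * self.n_actions)

    def greedy(self, obs):
        values = self._values(obs)
        return max(range(self.n_actions), key=values.__getitem__)

    def act(self, obs):
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(self.n_actions)
        return self.greedy(obs)

    def learn(self, obs, action, reward, next_obs, done):
        # Standard Q-learning target: r + gamma * max_a Q(s', a), zero after the end.
        best_next = 0.0 if done else max(self._values(next_obs))
        values = self._values(obs)
        values[action] += self.alpha * (reward + self.gamma * best_next - values[action])


def train(env, agent, episodes):
    """Algorithm-agnostic loop: it only needs reset/step and act/learn."""
    returns = []
    for _ in range(episodes):
        obs, done, total = env.reset(), False, 0.0
        while not done:
            action = agent.act(obs)
            next_obs, reward, done, _info = env.step(action)
            agent.learn(obs, action, reward, next_obs, done)
            obs, total = next_obs, total + reward
        returns.append(total)
    return returns


env = ToyCorridorEnv()
agent = TabularQAgent(n_actions=env.n_actions)
returns = train(env, agent, episodes=300)
print("mean return over the last 50 episodes:", sum(returns[-50:]) / 50)
```

With a library such as OpenAI Baselines, the library normally supplies both the agent and the loop, so the part you provide is the environment object. Keep in mind that DDPG and TD3 are designed for continuous action spaces, while DQN expects a discrete one, so the action space of the task environment determines which algorithms apply.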

Thanks a lot … the course you mention is the next step: extending the Q-learning and policy gradient algorithms with ANNs/CNNs as function approximators, plus object detection.

I also have another question, related to the other robots used with openai_ros.

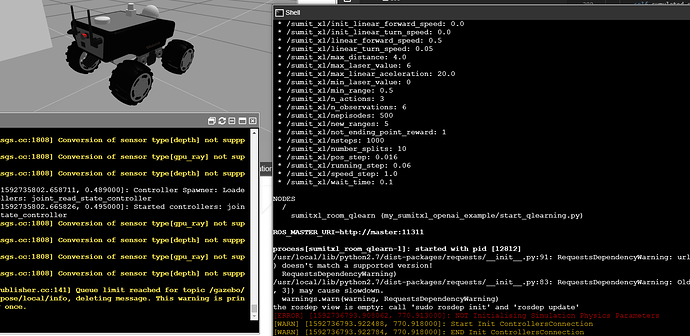

I’m trying to test the QLearn algorithm with the SumitXL openai_ros example from the ROSDS course, and I’m getting the following issue.

The robot is instantiated, but it doesn’t move.

It seems that Gazebo’s physics engine has a problem. Could this be due to the Gazebo version?

Thanks